You’ve seen it: an AI pilot dazzles in the demo, everyone applauds, and then nothing goes live.

McKinsey says 74 percent of pilots never launch, and IDC found that only four of thirty-three prototypes escape proof of concept, with each one burning through $500K to $2M along the way.

Those failures drain budgets, stall morale, and let rivals who do deploy models grow revenue 2.5× faster.

Here’s why pilots stall and how seven proven providers close the gap.

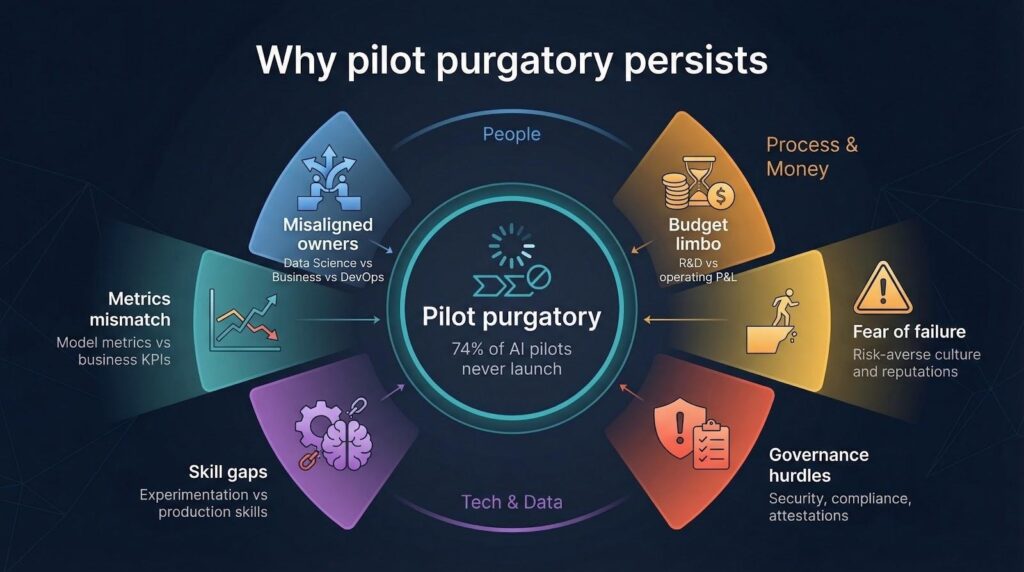

Why pilot purgatory persists

We blame technology for slow roll-outs, but stalled pilots are mostly a people problem.

Inside most enterprises, the data-science team owns the model, the business unit owns the outcome, and DevOps owns production. Three silos, three roadmaps, zero shared incentives. Progress stops the moment the demo ends.

Budget cycles add friction. A pilot’s cost sits on an R&D line item, yet production funding must come from an operating P&L. Finance asks for a business case the pilot team never scoped, and months vanish while fresh numbers are built. TD SYNNEX targets this gap through its Destination AI program, which unites more than 60 AI vendors with enablement resources and expert workshops. The program’s AI Game Plan session compresses the business-case scramble into a two-day sprint. The company’s 2025 Direction of Technology survey found that 45 percent of partners struggle most with translating AI promise into customer-specific value. AI Game Plan therefore concludes with a ranked list of two or three high-impact use cases and a 90-day implementation roadmap, so Finance can approve funding while enthusiasm is still high.

Governance steps in next. Security teams request threat models, compliance teams ask for attestations, and that tidy Jupyter notebook suddenly needs auditable pipelines, role-based access, and change controls. The refactor feels like rewriting the project from scratch because, in practice, it is.

Talent gaps widen the crater. Many data scientists excel at experimentation but have never shipped containerized services, managed feature stores, or tuned CI/CD. Your seasoned software engineers may have never wrangled a large language model. Skills don’t overlap, so hand-offs misfire.

Then there’s the metrics mess. Pilots chase accuracy or BLEU scores; executives care about churn reduction and upsell rates. Without a translation layer, stakeholders struggle to see why a “state-of-the-art” model matters to the number they report to Wall Street each quarter.

Finally, culture kicks in. A pilot feels safe: low stakes, novelty bias, press-release excitement. A production launch feels risky: live customers, real SLAs, real reputations. Risk-averse leaders stall, hoping to avoid the spotlight if something breaks.

Put those misaligned owners, budget limbo, governance hurdles, skill gaps, fuzzy KPIs, and fear of failure together and you get the 74 percent graveyard we met earlier. Pilot purgatory isn’t one obstacle; it’s a perfect storm of small, solvable frictions that amplify one another.

The rest of this guide shows how the top providers break that storm and how you can, too.

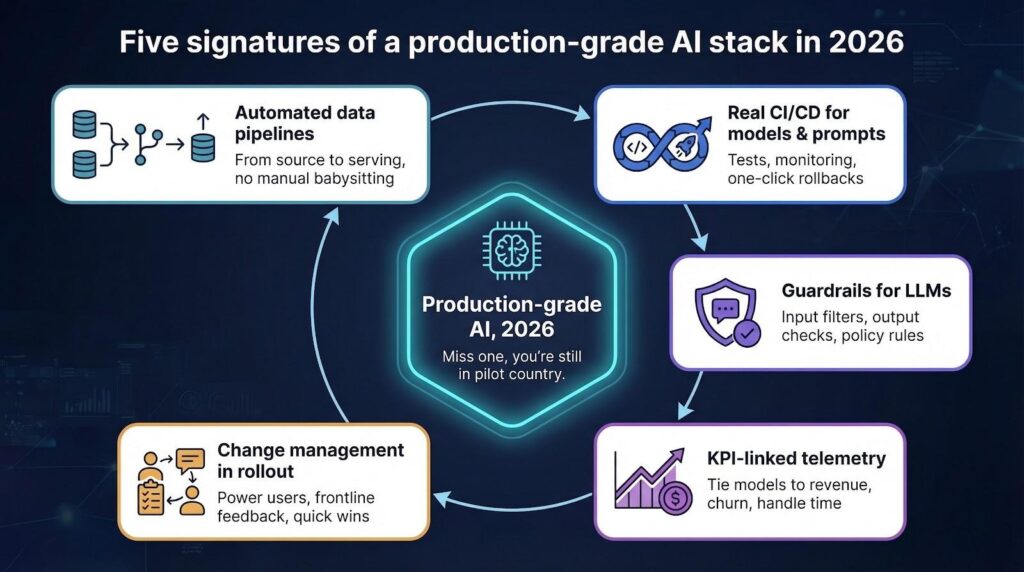

What production-grade looks like in 2026

Before we discuss vendors, we need a shared bar. A modern production-grade AI stack shows five clear signs. Miss even one and you are still in pilot country.

First, data pipelines run from source to serving without manual babysitting. Incremental updates flow through version-controlled steps, so yesterday’s model never trains on tomorrow’s schema.
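
One way to picture a version-controlled pipeline step is a schema check that runs before any record reaches training or serving. The sketch below is illustrative only: `SchemaVersion`, `schema_violations`, and the `CLAIMS_V1` columns are hypothetical names, not part of any vendor's product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SchemaVersion:
    """A pinned schema: version label plus column name -> expected Python type."""
    version: str
    columns: dict


def schema_violations(record: dict, schema: SchemaVersion) -> list[str]:
    """Return human-readable problems; an empty list means the record conforms."""
    problems = []
    for name, expected in schema.columns.items():
        if name not in record:
            problems.append(f"missing column: {name}")
        elif not isinstance(record[name], expected):
            actual = type(record[name]).__name__
            problems.append(f"{name}: expected {expected.__name__}, got {actual}")
    for name in record:
        if name not in schema.columns:
            problems.append(f"unexpected column: {name}")
    return problems


# Hypothetical schema for an insurance-claims feed.
CLAIMS_V1 = SchemaVersion("v1", {"claim_id": str, "amount": float})
```

A pipeline step could refuse to proceed whenever `schema_violations` returns a non-empty list, which keeps a changed upstream schema from silently reaching the model.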

Second, every code change, whether model, prompt, or feature logic, travels a real CI/CD path. Tests fire, monitors light up, and rollbacks happen with one click. That discipline turns experimental notebooks into software the business can trust.
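
A release gate of this kind can be as small as a function that compares a candidate's metrics with the current baseline. This is a minimal sketch under two assumptions: every metric is "higher is better", and the metric names and the `max_regression` tolerance are placeholders you would choose yourself.

```python
def release_decision(candidate: dict, baseline: dict, max_regression: float = 0.01) -> str:
    """Return "promote" if no baseline metric regresses beyond the tolerance.

    Assumes higher values are better for every metric. A metric that is
    missing from the candidate counts as a failure.
    """
    for metric, base_value in baseline.items():
        value = candidate.get(metric)
        if value is None or value < base_value - max_regression:
            return "rollback"
    return "promote"
```

In a CI job, a "rollback" result would fail the build and leave the previous version serving traffic.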

Third, guardrails surround your large language models. Input filters, output checks, and policy rules keep the system safe from toxic prompts, secret leaks, and hallucinated facts.
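
As a rough illustration of input filters and output checks, the sketch below rejects one known prompt-injection phrase and redacts strings shaped like credentials. The patterns are examples chosen for this sketch, not a complete policy, and catching hallucinated facts needs grounding checks that go beyond pattern matching.

```python
import re

# Example patterns only; a real policy would be broader and centrally managed.
BLOCKED_INPUT_PATTERNS = [
    re.compile(r"ignore (all )?previous instructions", re.IGNORECASE),
]
SECRET_PATTERNS = [
    re.compile(r"AKIA[0-9A-Z]{16}"),                    # shape of an AWS access key ID
    re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),  # PEM private key header
]


def input_allowed(prompt: str) -> bool:
    """Input filter: False when the prompt matches a blocked pattern."""
    return not any(p.search(prompt) for p in BLOCKED_INPUT_PATTERNS)


def redact_output(text: str) -> str:
    """Output check: replace anything that looks like a secret before returning it."""
    for pattern in SECRET_PATTERNS:
        text = pattern.sub("[REDACTED]", text)
    return text
```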

Fourth, live telemetry ties model behavior to business KPIs. We track lift in revenue, drop in handle time, or any metric that moves the scoreboard. Accuracy alone no longer earns a seat at the table.
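
Tying a model to a KPI usually starts with a simple comparison between a control group and the group served by the model. The sketch below computes relative lift; the function name and the handle-time figures in the usage note are invented for illustration.

```python
from statistics import mean


def relative_lift(control: list[float], treatment: list[float]) -> float:
    """Relative change in a KPI: (treatment mean - control mean) / control mean."""
    base = mean(control)
    if base == 0:
        raise ValueError("control mean is zero; relative lift is undefined")
    return (mean(treatment) - base) / base
```

For example, average handle times of 300 seconds without the model and 270 seconds with it give a lift of -0.1, a ten percent drop. A production dashboard would add confidence intervals before anyone claims the win.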

Finally, change management is baked into the rollout. Power users train early, frontline teams iterate on feedback, and leadership celebrates quick wins to keep momentum high.

When these five signs show up together, an AI project crosses from clever pilot to durable product.

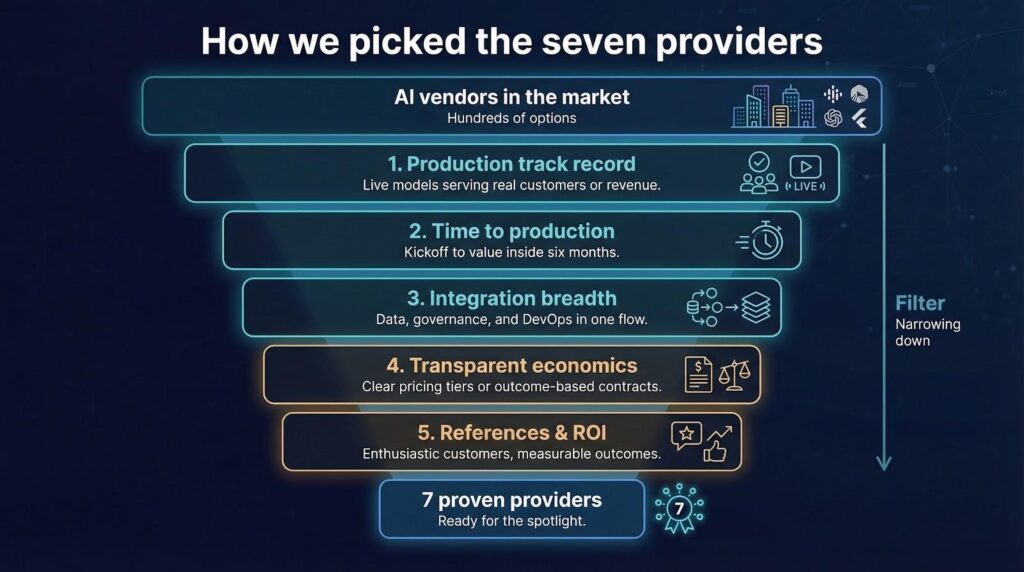

How we picked the seven providers

Selecting AI partners is not a popularity contest. We focused on proof, not buzz.

First, we looked for companies with a public record of shipping models into production at scale. Slide-deck demos did not count. Each vendor had to show at least three enterprise launches that touched live customers or revenue.

Next, we checked time to production. A strong partner moves from kickoff to value inside six months, so we dropped any firm without dated case studies to prove it.

Then we reviewed breadth of integration. The winners connect data ingestion, model governance, and DevOps in one flow instead of handing you a half-built bridge.

Transparent economics mattered, too. Hidden consulting hours slow momentum, so we prioritized partners that publish pricing tiers or outcome-based contracts.

Finally, we called references and asked direct questions about support, change management, and post-launch ROI. Only vendors whose customers spoke with measurable enthusiasm made the list.

That filter left seven names, each proven and ready for the spotlight.

Databricks: the lakehouse workhorse

If your data lives everywhere and nowhere at once, Databricks brings order to the chaos.

The company’s Lakehouse architecture unifies raw ingestion, feature engineering, and model serving on one platform. You move from ETL to inference without hopping tools or copying data, so each hand-off that slows teams simply disappears.

Speed is Databricks’ calling card. One global insurer moved a fraud-detection pilot from notebook to production API in eighty-nine days, trimming claims leakage by eight percent in its first quarter. Engineers credit automated lineage tracking and a push-button model registry—release gates that feel like Git, not change boards.

Databricks also excels at governance. Fine-grained access controls and audit logs satisfy security teams, while Delta Live Tables prevent schema drift from sending bad data into the next batch.

Bottom line: if you want full-stack control without stitching ten tools together, Databricks gives you a dependable runway from pilot to daily business impact.

AWS Bedrock: genAI at enterprise scale

If your board keeps asking about large language models, Bedrock gives you a fast, governed yes.

Bedrock wraps multiple foundation models (Anthropic Claude, Amazon Titan, Cohere, and others) behind one API. You pick the model that fits the task, then rely on familiar AWS tools for security, logging, and autoscaling.
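
To make the "one API" point concrete, here is a minimal sketch built on the Bedrock Runtime Converse operation as exposed by boto3. The helper name `ask_model` is hypothetical, the model ID in the usage comment is only an example, and which models you can call depends on the region and on the access enabled in your account.

```python
def ask_model(client, model_id: str, prompt: str, max_tokens: int = 256) -> str:
    """Send one user message through Bedrock's Converse API and return the text reply.

    `client` is expected to be a boto3 "bedrock-runtime" client. Switching
    models means changing `model_id` only; the request shape stays the same.
    """
    response = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
        inferenceConfig={"maxTokens": max_tokens},
    )
    return response["output"]["message"]["content"][0]["text"]


# Usage (needs boto3, AWS credentials, and model access in the account):
#   import boto3
#   client = boto3.client("bedrock-runtime", region_name="us-east-1")
#   print(ask_model(client, "anthropic.claude-3-haiku-20240307-v1:0", "Say hello."))
```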

One European bank turned a chatbot proof of concept into a 24/7 customer-service assistant in just twelve weeks. Native integration with IAM and CloudWatch let compliance teams view every prompt and response in dashboards they already used, so approval came in days, not quarters.

Cost controls seal the deal. Usage caps and budget alerts stop surprise bills, and model choice flexibility lets you shift to lower-cost inference when traffic is light.

For enterprises already committed to AWS, Bedrock feels less like a pilot and more like adding a new region—familiar, compliant, and ready for production traffic.

Google Vertex AI: fast track for multimodal models

When your use case mixes text, images, and structured data, Vertex AI smooths the hand-offs.

Google built Vertex on the same backbone that powers billions of Gmail autocompletes. That scale shows. Pipelines spin up with pre-trained multimodal encoders, and a managed Feature Store feeds each modality without manual stitching.

A global retailer merged product photos, descriptions, and live inventory into a dynamic search assistant. The pilot reached sixty-four countries in one hundred five days and lifted average order value by five percent in the first quarter. Teams credit Vertex AI Pipelines, whose pipeline DAGs generate fully versioned, repeatable builds.

Security is built in. Private Service Connect keeps data inside the VPC, and Context-Aware Access ties model endpoints to workforce identity rules already enforced in Google Workspace.

If your roadmap includes search that blends vision and language, Vertex gives you a single launchpad while keeping governance teams happy.

Microsoft Azure AI Studio: enterprise guardrails out of the box

Already a Microsoft shop? Azure AI Studio lets you connect OpenAI, Speech, and Vision models to existing apps without rewriting your security playbook.

The studio’s “grounded generation” pattern is the highlight. Add a retrieval endpoint to an OpenAI model, and the framework auto-injects citations, monitors toxicity, and logs every token for audit. Legal teams relax, product teams keep shipping.

One manufacturer deployed an internal copilot that drafts engineering change orders. Using Azure AI Studio templates, the team moved from SharePoint data crawl to live Teams integration in ninety-two days. Early metrics show a forty percent cut in document prep time and, crucially, zero compliance incidents.

Billing is familiar. Consumption meters land on the same Azure invoice your CFO already reviews, so no one needs a new vendor approval cycle.

For organizations deep in the Microsoft stack, Azure AI Studio feels like a natural extension of the cloud you run today. It is secure, monitored, and ready for production traffic.

Weights & Biases: experiment tracking that survives production

Pilots fail when no one remembers which model, dataset, or hyper-parameter combo produced the good results. Weights & Biases (W&B) fixes that memory loss.

Every run, whether local or in the cloud, streams metrics, artifacts, and configs into a searchable timeline. Product teams later promote the exact winning checkpoint to the W&B model registry, where CI jobs trigger container builds and smoke tests. No more “which notebook did we change?” debates.

A biotech startup moved a protein-folding model from research lab to FDA-validated workflow in thirteen weeks. Auditors accepted W&B’s immutable run history as evidence, cutting legal review time by thirty percent.

The interface stays light. Your data scientists add a four-line import, and dashboards appear in minutes. Yet the backend scales; Fortune 50 teams log millions of runs without hitting SaaS limits.
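
The handful of lines in question typically look like the sketch below, which uses `wandb.init`, `run.log`, and `run.finish` from the W&B Python SDK. The wrapper `track_run`, the project name, and the config values are hypothetical; the module is passed in as an argument only so the sketch can be imported without the package installed.

```python
def track_run(wandb, project: str, config: dict, losses: list[float]) -> None:
    """Record one training run: its config up front, then one loss value per step."""
    run = wandb.init(project=project, config=config)
    for step, loss in enumerate(losses):
        run.log({"loss": loss}, step=step)
    run.finish()


# Usage (needs the wandb package and a logged-in account):
#   import wandb
#   track_run(wandb, "fraud-pilot", {"learning_rate": 0.001}, [0.9, 0.6, 0.4])
```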

If your biggest gap is experiment lineage and reproducibility, W&B turns short-lived pilots into auditable, promotable assets.

Snowflake Cortex: analytics DNA meets generative AI

If your analysts already live in Snowflake worksheets, Cortex turns their SQL playground into an AI springboard.

Cortex embeds foundation models directly inside the Snowflake engine. Pass a table column into SNOWFLAKE.CORTEX.COMPLETE(), and you get summaries, classifications, or chat-style responses, while data stays on the platform.

That architecture removed friction for a U.S. media group. Editors needed auto-generated show notes for 50,000 podcast episodes. Using Cortex, data engineers wrote three SQL lines, scheduled them in Snowflake Tasks, and shipped the feature to production in forty-four days. No export scripts, no new infrastructure, and a clear view from model cost to content-team ROI.
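
The media group's actual SQL is not published, so the following is only a guess at the general shape of such a statement, rendered from Python to keep the examples in one language. It assumes the documented `SNOWFLAKE.CORTEX.COMPLETE(model, prompt)` function. The table, the column names (including `episode_id`), and the model name are hypothetical, and model availability varies by Snowflake region.

```python
def show_notes_sql(table: str, text_column: str, model: str = "mistral-large") -> str:
    """Render a SELECT that asks a Cortex model for show notes, one row per episode.

    Identifiers are interpolated directly, so pass trusted names only.
    """
    prompt = "Write concise show notes for this podcast transcript: "
    return (
        f"SELECT episode_id, "
        f"SNOWFLAKE.CORTEX.COMPLETE('{model}', CONCAT('{prompt}', {text_column})) AS show_notes "
        f"FROM {table};"
    )


print(show_notes_sql("podcast_episodes", "transcript"))
```

The rendered statement could then be wrapped in a Snowflake Task to run on a schedule, as the example above describes.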

Governance is built in. The same row-level security that protects financial reports now guards prompt inputs. Usage logs sit beside existing cost views, so Finance can audit spend with a single query.

For data-centric teams who think in joins, not Dockerfiles, Cortex makes generative AI feel like just another SQL function, and that is the point.

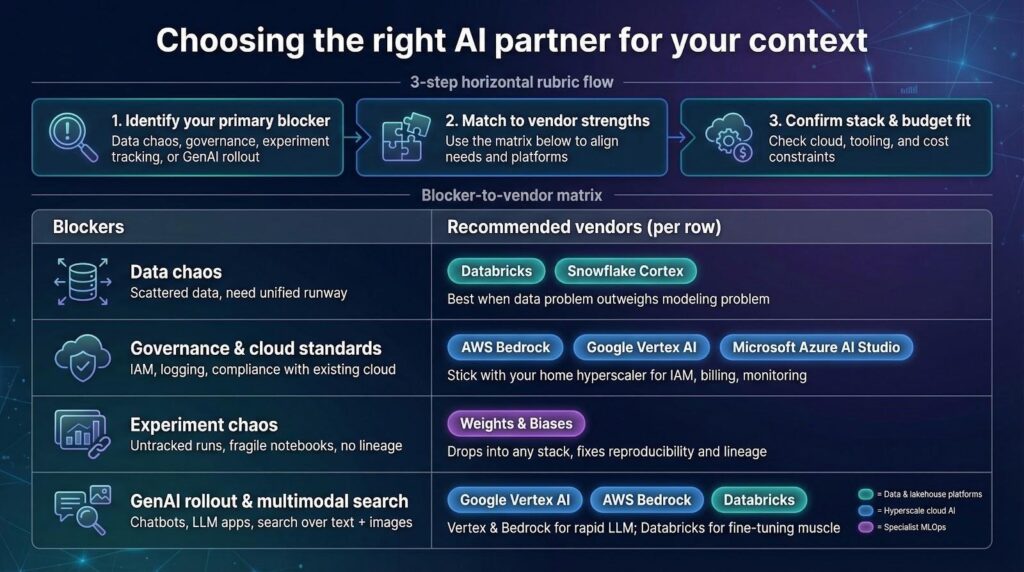

Choosing the right partner for your context

No two AI rollouts look alike. Your best-fit partner depends on where you sit today and where you need to land next quarter.

If you wrestle with scattered data and need an all-in-one runway, Databricks or Snowflake Cortex can lock storage, features, and models into one governed loop. Both shine when the data problem outweighs the modeling problem.

Already standardized on a hyperscale cloud? Stick with home turf. AWS Bedrock, Google Vertex AI, and Azure AI Studio mesh with the IAM, billing, and monitoring stacks your ops team already patrols. Less integration fuss, faster sign-offs.

Need surgical help with experiment chaos? Weights & Biases drops into any stack, bringing reproducibility without forcing a backend migration. Ideal for research-heavy teams who love their current infrastructure but hate version-tracking spreadsheets.

If multimodal or generative search features sit on your roadmap, Vertex and Bedrock lead on rapid LLM deployment, while Databricks offers fine-tuning muscle when you need full control.

Run this quick rubric:

- Identify your primary blocker: data chaos, governance, experiment tracking, or GenAI rollout.

- Match that blocker to the vendor’s core strength above.

- Confirm stack compatibility and budget fit.

Follow those three steps and you will pick a partner who accelerates your plan rather than rebuilding it.
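
The first two steps of the rubric reduce to a lookup table. The sketch below encodes the pairings suggested in this section. Mapping "governance" to the three hyperscale clouds is an interpretation of the "faster sign-offs" point rather than something stated outright, and the names `VENDORS_BY_BLOCKER` and `shortlist` are made up for this example.

```python
# Blocker -> vendors, following this section's recommendations.
# The "governance" row is an assumption drawn from the hyperscaler paragraph.
VENDORS_BY_BLOCKER = {
    "data chaos": ["Databricks", "Snowflake Cortex"],
    "governance": ["AWS Bedrock", "Google Vertex AI", "Azure AI Studio"],
    "experiment tracking": ["Weights & Biases"],
    "genai rollout": ["Google Vertex AI", "AWS Bedrock"],
}


def shortlist(blocker: str) -> list[str]:
    """Return candidate vendors for a primary blocker (case-insensitive)."""
    key = blocker.strip().lower()
    if key not in VENDORS_BY_BLOCKER:
        raise ValueError(f"unknown blocker: {blocker!r}")
    return list(VENDORS_BY_BLOCKER[key])
```

Stack compatibility and budget fit, the third step, still need a human conversation with each shortlisted vendor.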